Are you a journalist? Please sign up here for our press releases

Subscribe to our monthly newsletter:

We do not merely recognize objects – our brain is so good at this task that we can automatically supply the concept of a cup when shown a photo of a curved handle or identify a face from just an ear or nose. Neurobiologists, computer scientists, and robotics engineers are all interested in understanding how such recognition works – in both human and computer vision systems. New research by scientists at the Weizmann Institute of Science and the Massachusetts Institute of Technology (MIT) suggests that there is an “atomic” unit of recognition – a minimum amount of information an image must contain for recognition to occur. The study’s findings, which recently appeared in the Proceedings of the National Academy of Sciences (PNAS), imply that current models need to be adjusted, and they have implications for the design of computer and robot vision.

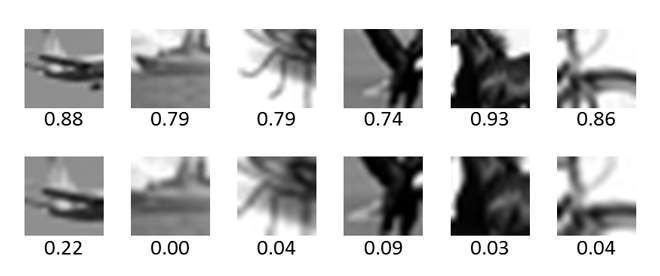

In the field of computer vision, for example, the ability to recognize an object in an image has been a challenge for computer and artificial intelligence researchers. Prof. Shimon Ullman and Dr. Daniel Harari, together with Liav Assif and Ethan Fetaya, wanted to know how well current models of computer vision are able to reproduce the capacities of the human brain. To this end they enlisted thousands of participants from Amazon’s Mechanical Turk and had them identify series of images. The images came in several formats: Some were successively cut from larger images, revealing less and less of the original. Others had successive reductions in resolution, with accompanying reductions in detail.

When the scientists compared the scores of the human subjects with those of the computer models, they found that humans were much better at identifying partial- or low-resolution images. The comparison suggested that the differences were also qualitative: Almost all the human participants were successful at identifying the objects in the various images, up to a fairly high loss of detail – after which, nearly everyone stumbled at the exact same point. The division was so sharp, the scientists termed it a “phase transition.” “If an already minimal image loses just a minute amount of detail, everybody suddenly loses the ability to identify the object,” says Ullman. “That hints that no matter what our life experience or training, object recognition is hardwired and works the same in all of us.”

The researchers suggest that the differences between computer and human capabilities lie in the fact that computer algorithms adopt a “bottom-up” approach that moves from simple features to complex ones. Human brains, on the other hand, work in “bottom-up” and “top-down” modes simultaneously, by comparing the elements in an image to a sort of model stored in their memory banks.

The findings also suggest there may be something elemental in our brains that is tuned to work with a minimal amount – a basic “atom” – of information. That elemental quantity may be crucial to our recognition abilities, and incorporating it into current models could improve their sensitivity. These “atoms of recognition” could prove valuable tools for further research into the workings of the human brain and for developing new computer and robotic vision systems. For more on "atoms of recognition," visit the website.

Prof. Shimon Ullman is the incumbent of the Ruth and Samy Cohn Professorial Chair of Computer Sciences.