Rats use a sense that humans don’t: whisking. They move their facial whiskers back and forth about eight times a second to locate objects in their environment. Could humans acquire this sense? And if they can, what could understanding the process of adapting to new sensory input tell us about how humans normally sense? At the Weizmann Institute, researchers explored these questions by attaching plastic “whiskers” to the fingers of blindfolded volunteers and asking them to carry out a location task. The findings, which recently appeared in the Journal of Neuroscience, have yielded new insight into the process of sensing, and they may point to new avenues in developing aids for the blind.

The scientific team, including Drs. Avraham Saig and Goren Gordon, and Eldad Assa in the

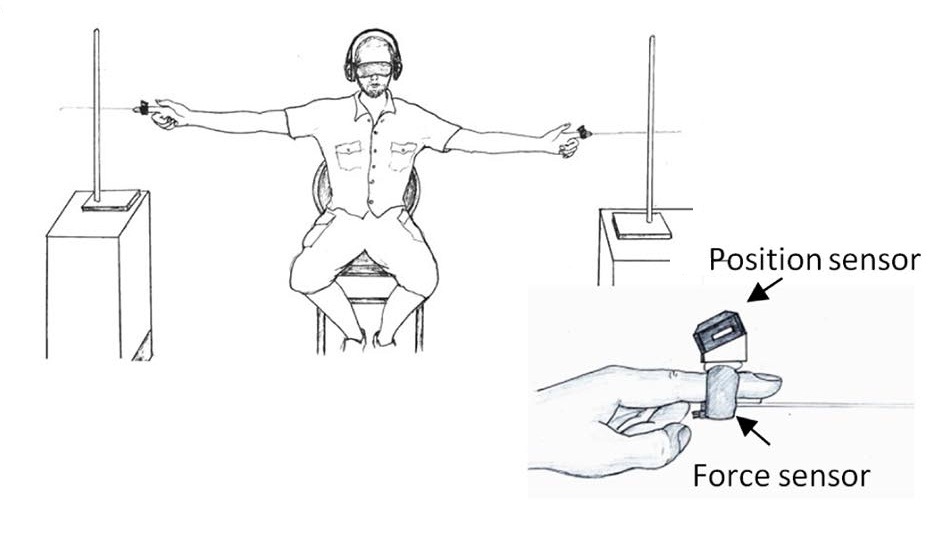

group of Prof. Ehud Ahissar and Dr. Amos Arieli, all of the Neurobiology Department attached a “whisker” – a 30 cm-long elastic “hair” with position and force sensors on its base – to the index finger of each hand of a blindfolded subject. Then two poles were placed at arm’s distance on either side and slightly to the front of the seated subject, with one a bit farther back than the other. Using just their whiskers, the subjects were challenged to figure out which pole – left or right – was the back one. As the experiment continued, the displacement between front and back poles was reduced, up to the point when the subject could no longer distinguish front from back.

On the first day of the experiment, subjects picked up the new sense so well that they could correctly identify a pole that was set back by only eight cm. An analysis of the data revealed that the subjects did this by inferring spatial information from sensory timing. That is, moving their bewhiskered hands together, they could determine which pole was the back one because the whisker on that hand made contact earlier.

When they repeated the testing the next day, the researchers discovered that the subjects had improved their whisking skills significantly: The average sensory threshold went down to just three cm, with some subjects able to sense a displacement of just one cm. Interestingly, the ability of the subjects to sense time differences had not changed over the two days. Rather, they had improved in the motor aspects of their whisking strategies: Slowing down their hand motions – in effect lengthening the delay time – enabled them to sense a smaller spatial difference.
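The trade-off between hand speed and spatial resolution can be made concrete with a little arithmetic. The sketch below assumes, purely for illustration, that both hands move at the same constant speed and that a subject can just resolve a fixed time difference between the two whisker contacts. The function name, the 100 ms temporal threshold and the hand speeds are hypothetical values, not measurements from the study.

```python
def spatial_threshold_cm(hand_speed_cm_per_s, temporal_threshold_ms):
    """Smallest pole offset (in cm) that produces a detectable contact delay.

    Assumes constant hand speed, so delay = offset / speed, and therefore
    the smallest detectable offset = speed * smallest detectable delay.
    """
    return hand_speed_cm_per_s * temporal_threshold_ms / 1000


# Hypothetical values: the temporal threshold stays fixed across both days,
# and only the hand speed changes.
TEMPORAL_THRESHOLD_MS = 100

fast_hands = spatial_threshold_cm(80, TEMPORAL_THRESHOLD_MS)  # faster sweep
slow_hands = spatial_threshold_cm(30, TEMPORAL_THRESHOLD_MS)  # slower sweep

print(f"fast sweep: {fast_hands} cm, slow sweep: {slow_hands} cm")
```

Under these assumptions, the same sense of timing resolves a smaller offset simply because the slower sweep stretches that offset into a longer delay.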

Saig: “We know that our senses are linked to muscles, for example ocular and hand muscles. In order to sense the texture of cloth, for example, we move our fingers across it, and to see stationary objects, our eyes must be in constant motion. In this research, we see that changing our physical movements alone – without any corresponding change in the sensitivity of our senses – can be sufficient to sharpen our perception.”

Based on the experiments, the scientists created a statistical model to describe how the subjects updated their “world view” as they acquired new sensory information – up to the point at which they were confident enough to rely on that sense. The model, based on principles of information processing, could explain the number of whisking movements needed to arrive at the correct answer, as well as the pattern of scanning the subjects employed – a gradual change from long to short movements. With this strategy, the flow of information remains constant. “The experiment was conducted in a controlled manner, which allowed us direct access to all the relevant variables: hand motion, hand-pole contact and the reports of the subjects themselves,” says Gordon. “Not only was there a good fit between the theory and the experimental data, we obtained some useful quantitative information on the process of active sensing.”
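The idea of updating a “world view” until confidence is high enough can be illustrated with a generic sequential Bayesian update. This is a minimal sketch and not the researchers’ model: it assumes each whisking movement yields a single noisy “left” or “right” cue that is correct with a fixed probability, and all names and numbers in it are hypothetical.

```python
def decide_back_pole(cues, cue_accuracy=0.7, confidence=0.95):
    """Accumulate noisy cues until one answer is believed strongly enough.

    cues: sequence of "left"/"right" strings, one per whisking movement.
    cue_accuracy: assumed probability that a single cue is correct.
    confidence: belief level at which the subject commits to an answer.
    Returns (answer, whisks_used); answer is None if the cues run out first.
    """
    p_left = 0.5  # prior belief that the left pole is the back one
    whisks = 0
    for cue in cues:
        whisks += 1
        # Likelihood of this cue under each hypothesis
        like_left = cue_accuracy if cue == "left" else 1 - cue_accuracy
        like_right = cue_accuracy if cue == "right" else 1 - cue_accuracy
        # Bayes' rule: posterior is proportional to likelihood times prior
        p_left = like_left * p_left / (
            like_left * p_left + like_right * (1 - p_left)
        )
        if p_left >= confidence:
            return "left", whisks
        if 1 - p_left >= confidence:
            return "right", whisks
    return None, whisks


answer, whisks_used = decide_back_pole(["left"] * 10)
print(answer, whisks_used)
```

In this toy version, more reliable cues lead to a decision in fewer whisking movements, which mirrors the link the article describes between incoming information and the number of movements needed.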

“Both sight and touch are based on arrays of receptors that scan the outside world in an active manner,” says Ahissar. “Our findings reveal some new principles of active sensing, and show us that activating a new artificial sense in a ‘natural’ way can be very efficient.” Arieli adds: “Our vision for the future is to help blind people ‘see’ with their fingers. Small devices that translate video to mechanical stimulation, based on principles of active sensing that are common to vision and touch, could provide an intuitive, easily used sensory aid.”

Prof. Ehud Ahissar’s research is supported by the Murray H. and Meyer Grodetsky Center for Research of Higher Brain Functions; the Jeanne and Joseph Nissim Foundation for Life Sciences Research; the Kahn Family Research Center for Systems Biology of the Human Cell; Lord David Alliance, CBE; the Berlin Family Foundation; Jack and Lenore Lowenthal, Brooklyn, NY; Research in Memory of Irving Bieber, M.D. and Toby Bieber, M.D.; the Harris Foundation for Brain Research; and the Joseph D. Shane Fund for Neurosciences. Prof. Ahissar is the incumbent of the Helen Diller Family Professorial Chair in Neurobiology.