Can computers see? Can they be taught to discern a polar bear on white snow? To tell where one spotted Dalmatian ends and another begins? To recognize a woman’s profile after viewing her face-on? Seemingly simple visual tasks that a human being takes for granted pose an enormous challenge to a computer: Every minor variation in lighting or angle interferes with the computer’s ability to perceive and identify objects. Living brains solve these problems with apparent ease; computer scientists are working hard to endow machines with similar abilities.

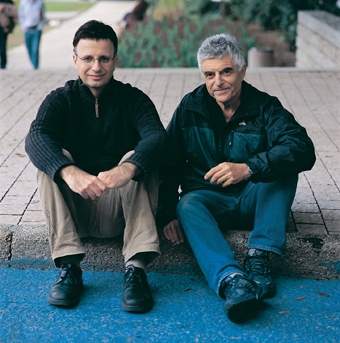

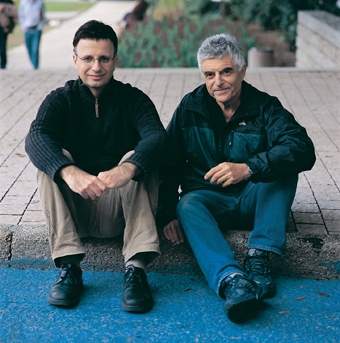

An innovative approach enabling computers to recognize objects was developed by Dr. Eitan Sharon when he was a graduate student under the guidance of Profs. Ronen Basri and Achi Brandt of the Weizmann Institute’s Computer Science and Applied Mathematics Department, in collaboration with departmental colleague Dr. Meirav Galun and Dr. Dahlia Sharon of the Massachusetts General Hospital. The approach is a multi-stage process that works from the bottom up, starting with individual pixels and building toward whole objects.

The computer starts by comparing the individual pixels in an image and dividing them into groups according to their light intensity. The resulting groups then go through a series of additional comparisons based on properties such as texture and shape. Groups that share common features are combined into increasingly larger segments, and at each stage the parameters for comparison become more numerous and complex. At the end of this process, known as segmentation, the computer can distinguish an object from its background.
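To make the first step concrete, here is a minimal sketch of grouping pixels by light intensity. It is a simplified stand-in, not the scientists' actual multi-stage method: it performs a single pass, merges only neighboring pixels, and ignores texture and shape. The function name `segment_by_intensity`, the `threshold` parameter and the tiny sample image are all assumptions made for illustration.

```python
def segment_by_intensity(image, threshold):
    """Group 4-connected pixels whose intensities differ by at most `threshold`.

    `image` is a list of rows of grayscale values. Returns a grid of the same
    shape in which pixels of the same group share an integer label.
    """
    rows, cols = len(image), len(image[0])
    parent = list(range(rows * cols))  # union-find forest, one node per pixel

    def find(i):
        # Follow parent links to the group's representative (with path halving).
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    def union(a, b):
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[max(ra, rb)] = min(ra, rb)

    for r in range(rows):
        for c in range(cols):
            here = r * cols + c
            # Compare with the right-hand neighbor.
            if c + 1 < cols and abs(image[r][c] - image[r][c + 1]) <= threshold:
                union(here, here + 1)
            # Compare with the neighbor below.
            if r + 1 < rows and abs(image[r][c] - image[r + 1][c]) <= threshold:
                union(here, here + cols)

    # Relabel the groups as 0, 1, 2, ... in scan order.
    labels = {}
    return [
        [labels.setdefault(find(r * cols + c), len(labels)) for c in range(cols)]
        for r in range(rows)
    ]


# Hypothetical 3x4 image: a dark patch, a bright patch and a mid-gray strip.
image = [
    [10, 12, 200, 205],
    [11, 13, 198, 202],
    [90, 92, 95, 201],
]
segments = segment_by_intensity(image, threshold=10)
for row in segments:
    print(row)
```

In a fuller pipeline, the groups found here would themselves be compared and merged again using richer properties, which is the "increasingly larger segments" stage described above.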

After obtaining good results at this stage, the scientists moved on to the next challenge – object recognition. As in a children’s “find the hidden object” game, the computer was instructed to scan through a large database and pick out an image of a pair of glasses identical to a pair it was shown. To perform this task, the computer divided a picture of the glasses into segments and compared these with all the glasses segments in the database, searching for examples whose features matched those of the original pair. At the end of the process, it succeeded in finding the identical glasses, even when these appeared at a different angle or otherwise looked slightly different from the original pair. The method was recently described in the journal Nature.
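The matching step can be pictured as a nearest-neighbor search over segment features. The sketch below assumes that each image has already been reduced to a short list of numbers; the feature values, the entry names and the functions `feature_distance` and `best_match` are invented for illustration, and the choice of Euclidean distance is likewise an assumption rather than the published method.

```python
import math


def feature_distance(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def best_match(query, database):
    """Return the name of the database entry whose features lie closest to `query`."""
    return min(database, key=lambda name: feature_distance(query, database[name]))


# Hypothetical database: each entry stands for the features of one image's segments.
database = {
    "glasses_round": (0.80, 0.10, 0.35),
    "glasses_square": (0.20, 0.75, 0.40),
    "sunglasses": (0.55, 0.50, 0.90),
}

# The same round glasses seen from a slightly different angle (made-up values).
query = (0.78, 0.14, 0.33)
print(best_match(query, database))
```

Because the comparison tolerates small differences in the feature values, a pair of glasses photographed at a somewhat different angle can still land closest to its own database entry.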

Now that the computer is capable of recognizing objects, can it be put to medical use – recognizing signs of disease? To test this idea, which has been explored by researchers around the world in a variety of ways, the Institute scientists defined a goal: identifying lesions in the brains of patients with multiple sclerosis. In these patients, the protective myelin sheath covering the nerve fibers is damaged, and this damage shows up in magnetic resonance imaging (MRI). The imaging provides the physician with a series of brain sections, which today must all be reviewed by a human being. “In the distant future, we hope that a computer program will be able to recognize the damaged areas independently,” says Basri. “In the more immediate future, the system should be able to help the physician identify these areas and provide information about their location and size, so as to be able to evaluate the patient’s condition or the effectiveness of treatment.”

With the help of Prof. Moshe Gomori, a radiologist from Hadassah University Hospital in Jerusalem, the scientists made a number of adjustments to the computer program, enabling it to analyze a three-dimensional image constructed from all the MRI section scans. Data processing then followed the same stages as object recognition: The picture of the brain was divided into segments, and each segment was characterized by a set of features defined by expert radiologists: light intensity, texture, shape and location in the brain. Then followed classification: The computer examined different segments and decided whether an area was healthy or damaged. Decision making involved a learning process: The computer reviewed pictures of brain areas affected by multiple sclerosis and gradually learned to characterize them.
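The learning and classification steps might look roughly like the following sketch. It uses a nearest-centroid rule, chosen here only because it is short; the article does not say which learning method the scientists used. The two features (intensity and texture), their values and the function names `train_centroids` and `classify` are all hypothetical.

```python
def train_centroids(examples):
    """Average the feature vectors of each label.

    `examples` is a list of (features, label) pairs; the result maps each
    label to the mean feature vector of its examples.
    """
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {
        label: tuple(total / counts[label] for total in acc)
        for label, acc in sums.items()
    }


def classify(features, centroids):
    """Assign the label whose centroid is closest to `features`."""
    def squared_distance(centroid):
        return sum((f - c) ** 2 for f, c in zip(features, centroid))

    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# Made-up training segments: (intensity, texture) with an expert's label.
training = [
    ((0.30, 0.20), "healthy"),
    ((0.35, 0.25), "healthy"),
    ((0.80, 0.60), "lesion"),
    ((0.85, 0.70), "lesion"),
]
centroids = train_centroids(training)

print(classify((0.78, 0.66), centroids))
print(classify((0.33, 0.20), centroids))
```

A real system would use many more features, including shape and location in the brain, and far more labeled examples.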

The first experiments have produced encouraging results: 60 to 70 percent of areas labeled by the computer as affected matched the physician’s assessment – a percentage similar to the degree of agreement between two physicians. Results of the study, performed by Ayelet Akselrod-Ballin, a graduate student of Basri and Brandt, in collaboration with Italian colleagues, were published in the Proceedings of the 2006 IEEE Computer Society Conference on Computer Vision and Pattern Recognition.
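The agreement figure is a simple ratio: of the areas the computer flagged as affected, what fraction did the physician also flag? A small sketch, using invented area identifiers rather than data from the study, with `agreement_rate` as a hypothetical helper name:

```python
def agreement_rate(computer_areas, physician_areas):
    """Fraction of computer-flagged areas that the physician also flagged."""
    computer_areas = set(computer_areas)
    if not computer_areas:
        return 0.0  # nothing flagged, so there is nothing to agree on
    return len(computer_areas & set(physician_areas)) / len(computer_areas)


# Hypothetical area IDs, not taken from the published experiments.
computer = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
physician = [1, 2, 3, 4, 5, 6, 7, 11, 12]
print(agreement_rate(computer, physician))
```

The same function can compare two physicians' labels, which is how a benchmark like the one quoted above could be computed.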

The Weizmann Institute method has great potential for the diagnosis of any disease or disorder that produces signs visible through MRI or computed tomography scanning. The scientists are already working on additional applications, including the development of a program to identify liver tumors.

Now that they’ve taught the computer to see, Institute scientists hope to turn it into a multidisciplinary doctor’s aide that will be found in every clinic, assisting in the diagnosis and follow-up of a variety of ills. Once this vision is realized, they will only need to teach the computer to show a little empathy and tell the patient to say “Ah!”

Prof. Ronen Basri’s research is supported by the A.M.N. Fund for the Promotion of Science, Culture and Arts in Israel.

Prof. Achi Brandt’s research is supported by the Philip M. Klutznick Fund for Research. Prof. Brandt is the incumbent of the Elaine and Bram Goldsmith Professorial Chair of Applied Mathematics.