10-08-2011

Dr. Elad Schneidman of the Weizmann Institute’s Neurobiology Department likens studying how neurons in the brain communicate to learning a new language just by listening to a native speaker. At first the task seems insurmountable, but little by little we begin to pick up on basic words and phrases that repeat themselves. By the time we understand a thousand or so words, we also have a primitive grasp of the grammar and can incorporate new words as we learn them.

Much of our knowledge about the brain has come from studying the “letters” or even “words” of single neuron activity, usually by measuring the electrical spikes of single neurons or neuron pairs in experiments. This is a bit like trying to understand an entire lecture from hearing a handful of random words. The really interesting “conversation,” says Schneidman, arises between larger groups of neurons. To get at the underlying rules governing neural communication and collective behavior, he looks at activity patterns in networks of around one hundred neurons and tries to understand their interactions.

Few studies deal with the detailed nature of such large groups of neurons. Aside from the experimental challenge, the difficulty is that even 100 neurons present a huge abundance of possible activity patterns, on the order of 10³⁰. Extracting meaningful information from such a network would seem to be an impractical proposition, at best.
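The size of that number can be checked with a few lines of arithmetic. The sketch below assumes each neuron is treated as either firing or silent within a given time window, so that 100 neurons have 2¹⁰⁰ possible patterns; the variable names are illustrative only.

```python
from math import log10

# Assumption: each neuron is either firing (1) or silent (0) in a time window.
n_neurons = 100

# Every neuron doubles the number of possible network patterns.
n_patterns = 2 ** n_neurons

# Power of ten that the pattern count reaches.
order_of_magnitude = int(log10(n_patterns))

print(f"{n_patterns:.3e} possible patterns, order of magnitude 10^{order_of_magnitude}")
```

No experiment can observe more than a tiny fraction of these patterns, which is why a model that generalizes from a limited sample is needed.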

Schneidman and his research student Elad Ganmor, together with Dr. Ronen Segev of Ben-Gurion University of the Negev, approached the problem by

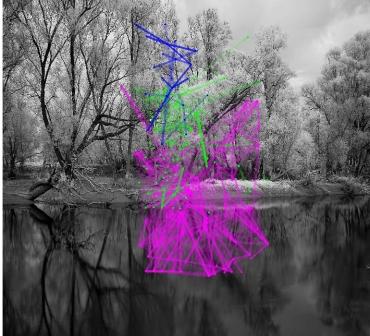

combining experimentation and mathematical modeling. The experiments involved fully functional pieces of salamander retina. In each two-millimeter square of tissue, around 100 nerve cells could be reliably recorded. The researchers showed these retina patches film clips of natural scenes and watched as the neurons fired off messages in short spikes of electricity. “These retinal neuron spikes,” says Schneidman, “are the output of the ‘computation’ that the retina performs on the visual input, which would then be sent to the brain. The retina is thus an extension of the brain, and its cellular communication is the same as that of brain cells. We can see unique activity patterns emerge from the ‘chatter’ as the network is exposed to the different scenes. Interestingly, the patterns we see in the retina networks have a specific ‘grammar’ that appears to hold only for natural scenes; not for white noise movies or other unnatural images shown to them.”

To reveal some of the ground rules for neuron activity, the scientists used a mathematical model similar to one commonly used in physics, where it was developed to study the behavior of large numbers of magnets in magnetic fields. An equivalent model is also used in statistics and in machine learning. In all these domains, complex behavior arises from pairwise interactions between elements – attraction and repulsion in magnets, on and off states in binary variables, firing and silence in neurons.

When the scientists first applied the model to small networks, it fit perfectly. Even for large networks, the experimental data seemed to fit fairly well, except for a few points that fell off the scale. Upon closer inspection, however, they realized that the data points that didn’t conform to the model belonged to the most frequently occurring activity patterns, and these reflected more complex grammar. In particular, they reflected dependencies between cells that could not be explained by pair relations alone. As a result, their model was good at predicting the rare “phrases” but less accurate when it came to the common ones. But just as we must learn to say: “I am hungry,” before we progress to ordering a full meal with wine, side dishes and dessert in a restaurant, Schneidman and his team realized that they couldn’t ignore the everyday expressions used in brain communication if they wanted to get a feel for its language. Their challenge was to find a way to deal with the common patterns and the rare ones at the same time.
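To make the idea of a pairwise model concrete, here is a minimal sketch of a magnet-style model for a toy network of three neurons. It is not the researchers' code or their fitted model: the `bias` and `coupling` values are invented for illustration, and firing and silence are written as +1 and -1, as in the physics convention.

```python
from itertools import product
from math import exp

# Hypothetical parameters for a 3-neuron toy network (not fitted to any data).
bias = [-1.0, -0.5, -1.5]                               # each cell's own tendency to fire
coupling = {(0, 1): 0.8, (0, 2): -0.3, (1, 2): 0.5}     # pairwise interactions

def log_weight(pattern):
    """Unnormalized log-probability of a pattern of +1 (firing) and -1 (silent)."""
    single = sum(b * s for b, s in zip(bias, pattern))
    pairs = sum(j * pattern[a] * pattern[b] for (a, b), j in coupling.items())
    return single + pairs

# With only three cells, all 2**3 patterns can be enumerated directly.
patterns = list(product([-1, 1], repeat=len(bias)))
weights = [exp(log_weight(p)) for p in patterns]
z = sum(weights)                                        # normalizing constant
probability = {p: w / z for p, w in zip(patterns, weights)}

for p, prob in sorted(probability.items(), key=lambda item: -item[1]):
    print(p, round(prob, 4))
```

In a model of this kind, every pattern's probability is determined by single-cell terms and pair terms alone. Dependencies that involve three or more cells at once have no term to represent them, which is the kind of limitation the common patterns exposed.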

Surprisingly, making a small change in the mathematical formula led to a very simple and accurate way to infer the grammar of this complex system. The original physics equation encodes the interactions between magnets with the numbers 1 and -1. Instead of representing a silent neuron with a -1 – as though it were a negative magnetic pole – they used a 0. While this may seem to be a mere “accounting” issue, it has a profound effect on the formula’s terms in one special case: that in which the elements in the network are only rarely active. This is exactly the situation in the brain: Most neurons are silent most of the time. Suddenly the common phrases could be interpreted, revealing fundamental interactions between neurons. Differences among the various phrases also enabled the researchers to infer different rules from each of the common patterns.
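One way to see why the choice of 0 rather than -1 matters when activity is rare is to count how many interaction terms can contribute to a single pattern. The sketch below is an illustration under simple assumptions, not the researchers' formula: it takes a hypothetical ten-cell pattern with two active cells and counts the products over pairs and triples of cells that are nonzero in each representation.

```python
from itertools import combinations
from math import prod

def count_nonzero_terms(pattern, order):
    """Count products over groups of `order` cells that are nonzero."""
    return sum(1 for group in combinations(pattern, order) if prod(group) != 0)

# Hypothetical sparse pattern: 10 cells, only cells 2 and 7 are firing.
binary = [0, 0, 1, 0, 0, 0, 0, 1, 0, 0]      # silent = 0, firing = 1
spin = [2 * x - 1 for x in binary]           # silent = -1, firing = +1

pairs_binary = count_nonzero_terms(binary, 2)     # only the pair where both cells fire
pairs_spin = count_nonzero_terms(spin, 2)         # every pair, since no value is zero
triples_binary = count_nonzero_terms(binary, 3)   # no triple has three firing cells
triples_spin = count_nonzero_terms(spin, 3)       # every triple

print(pairs_binary, pairs_spin, triples_binary, triples_spin)
```

With 0 for silence, an interaction term is switched on only when all the cells it involves fire together, so a sparse pattern touches very few terms. With -1, every term contributes to every pattern, silent cells included.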

In fact, from an incredibly complex network of possible interactions, the researchers obtained a picture of basic neural communication that is decidedly sparse, yet extremely accurate. “We could assemble a basic grammar of millions of activity patterns from about 500 common phrases that rely on two-, three- and four-way connections – provided we knew which examples to choose,” says Schneidman. “The grammar of neuron networks is eminently learnable.” He thinks it may be learnable precisely because it seems to work like language. The common, constantly repeated phrases may be the way that neurons gain their communication skills and continue to understand one another.

With this new insight into the nature of neural communication, the researchers were able to decode the visual information carried by large groups of retinal cells. Schneidman believes that this new approach could enable us to obtain a detailed picture of the workings of large groups of neurons in different parts of the brain. This might, eventually, lead the way to reading the information encoded in such networks and point to new approaches to treating neurological disorders.

Dr. Elad Schneidman's research is supported by the Jeanne and Joseph Nissim Foundation for Life Sciences Research; the Peter and Patricia Gruber Award; and the J&R Foundation.